Partial Automation: A Human-Centric Approach to Artificial Intelligence and Foresight for the Future

Explore partial automation: a human-centric AI approach. Discover how it’s shaping the future with strategic foresight and responsible artificial intelligence.

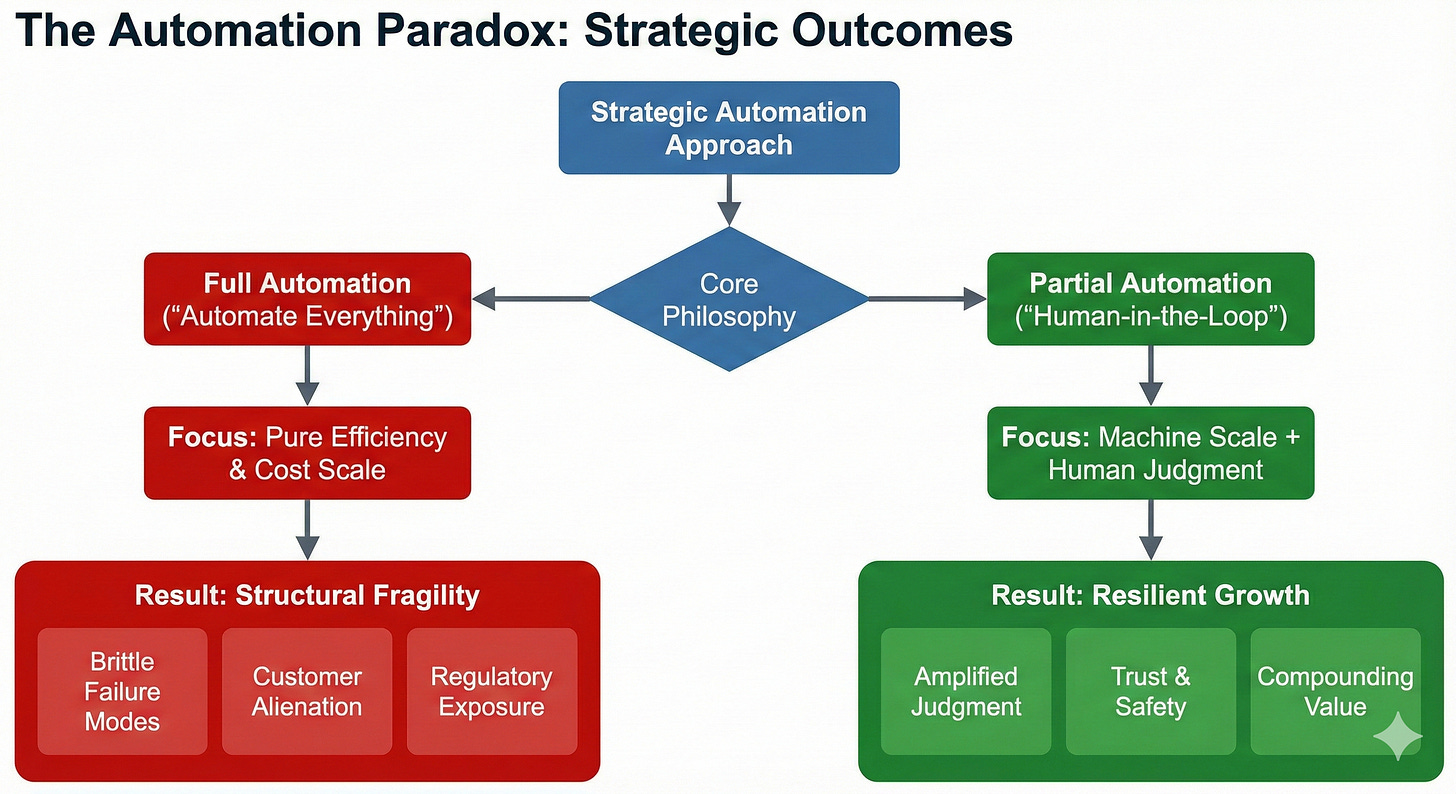

Efficiency without humanity creates fragility. In 2025 and beyond, the real competitive edge for organizations will not come from automating everything. Instead, it will emerge from knowing precisely where human judgment must remain central—and designing systems so that people never become mere safety nets (Klarna, 2025).

Partial automation is not simply a compromise—it is a strategic, future-proof choice that amplifies human judgment, protects social cohesion, and compounds organizational and societal value. The mandate for leaders is clear: design AI systems where human oversight is the operating principle—not merely a failsafe (Abulibdeh, 2025).

Introduction

Debates about automation are no longer confined to technical circles; they have become civic, economic, and cultural concerns. Full automation promises scale and cost reductions, yet it too often brings brittle failure modes, customer alienation, regulatory exposure, and sweeping workforce displacement. Partial automation, in which machines handle scale and routine pattern recognition while humans supply context, empathy, and accountability, offers a more resilient and balanced path (IBM, 2024).

This expanded article presents an in-depth blueprint for human-in-the-loop strategies, policy levers that align safety with full employment, and operating models designed to augment human capabilities without eroding welfare. Drawing on recent enterprise results, regulatory action, and global policy innovation, this article advocates for partial automation as the foundation for successful, responsible AI deployment.

Human-in-the-Loop: From Safety Net to Design Principle

Human-in-the-loop (HITL) is often dismissed as a temporary bridge until models “get good enough.” That view misses the point: in complex, volatile environments, HITL is a design strategy for decision systems, not a stopgap. Algorithms excel at high-volume perception and prediction; humans excel at abstraction, ethics, and meaning-making. Both are necessary to reduce error variance, accelerate learning cycles, and build trusted relationships with customers and regulators (ISO/IEC, 2023; Klarna, 2025).

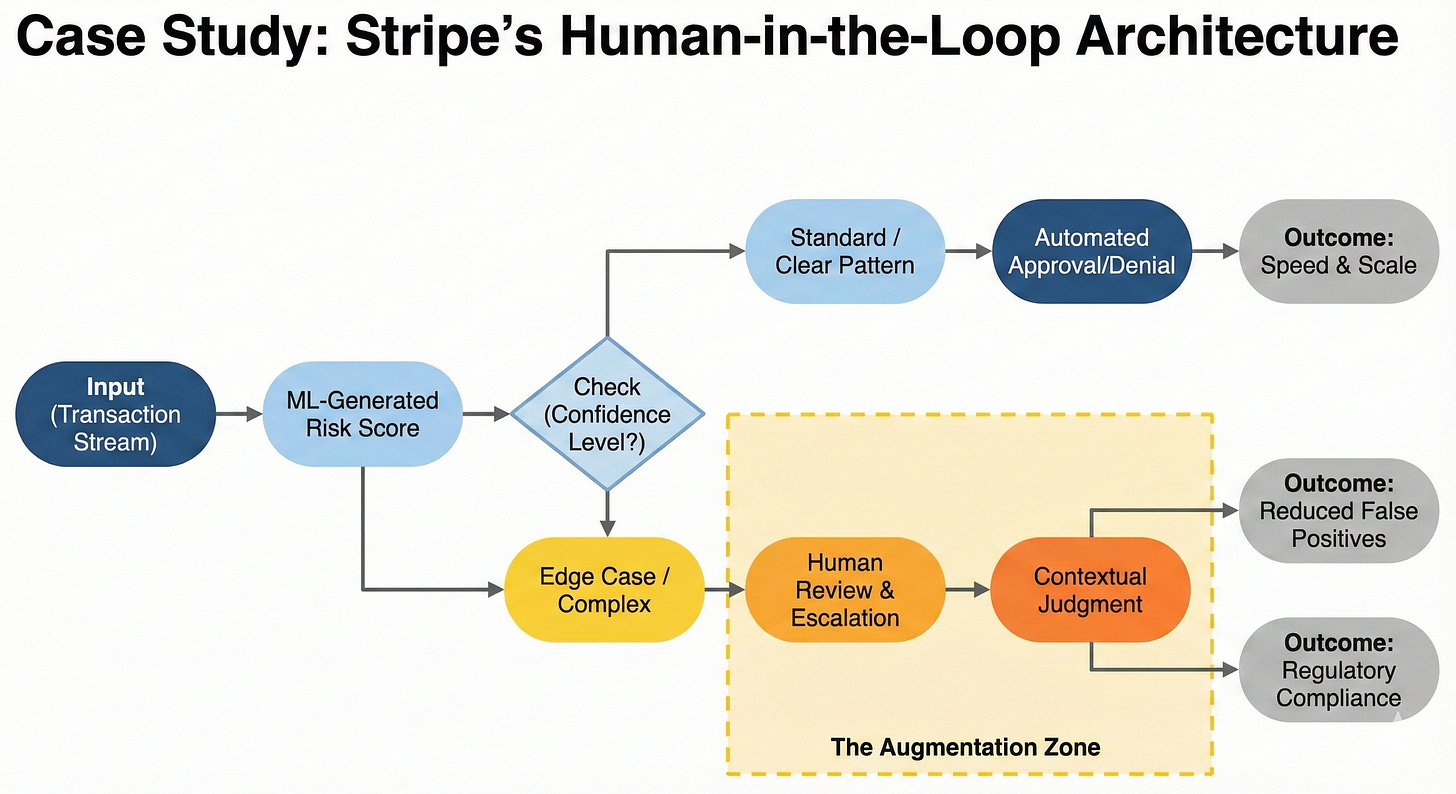

In the high-stakes arena of fintech, Stripe exemplifies the power of augmented intelligence over pure automation in fraud scoring. Rather than relying solely on black-box algorithms, Stripe has pioneered an ensemble approach that combines machine learning-generated risk scores for the majority of transactions with critical human review and escalation for complex edge cases. This human-in-the-loop methodology sharply reduces false positives while robustly protecting merchant quality, customer trust, and regulatory compliance, underscoring that human augmentation remains vital even in deep learning-dominated domains (Stripe, n.d.).
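The score-then-escalate pattern can be sketched in a few lines of Python. This is a minimal illustration, not Stripe's implementation or API: it assumes a model that already emits a fraud probability between 0 and 1, and the thresholds (`APPROVE_BELOW`, `BLOCK_ABOVE`, `high_value`) and the `route_transaction` helper are hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    AUTO_BLOCK = "auto_block"


# Hypothetical thresholds; in practice they are tuned on labeled outcomes.
APPROVE_BELOW = 0.10
BLOCK_ABOVE = 0.90


@dataclass
class Transaction:
    tx_id: str
    amount: float
    risk_score: float  # model-estimated fraud probability in [0, 1]


def route_transaction(tx: Transaction, high_value: float = 10_000.0) -> Route:
    """Let the model settle clear-cut cases; send the ambiguous middle band,
    and every high-value transaction, to a human reviewer."""
    if not 0.0 <= tx.risk_score <= 1.0:
        raise ValueError(f"risk_score out of range: {tx.risk_score}")
    if tx.amount >= high_value:
        return Route.HUMAN_REVIEW  # stakes too high for an automatic decision
    if tx.risk_score < APPROVE_BELOW:
        return Route.AUTO_APPROVE
    if tx.risk_score > BLOCK_ABOVE:
        return Route.AUTO_BLOCK
    return Route.HUMAN_REVIEW  # uncertain: escalate to a person


if __name__ == "__main__":
    batch = [
        Transaction("t1", 42.0, 0.02),
        Transaction("t2", 180.0, 0.55),
        Transaction("t3", 75.0, 0.97),
        Transaction("t4", 25_000.0, 0.01),
    ]
    for tx in batch:
        print(tx.tx_id, route_transaction(tx).value)
```

Only the ambiguous band and high-value transactions reach a person, so reviewer time concentrates where model confidence is lowest and stakes are highest. The thresholds would need revisiting as fraud patterns drift.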

Healthcare is a domain where HITL is non-negotiable. The U.S. FDA’s approach to AI/ML-enabled medical devices emphasizes real-world monitoring, algorithmic transparency, and stringent human-in-the-loop controls, which are especially important for systems that adapt and learn after deployment. Peer-reviewed analysis argues that these safeguards are critical to preventing bias drift, ensuring safety, and sustaining public trust in clinical outcomes (Abulibdeh, 2025).

What Goes Wrong If We Ignore Partial Automation: Systemic Pitfalls and Failure Modes

When organizations chase full automation as an end in itself, they accelerate exposure to cascading failure modes that are technical, economic, and political. Hallucinations and brittle reasoning in large language models (LLMs) are not mere nuisances; they are structural risks when models are granted operational authority without human oversight. Studies and industry postmortems show that models confidently produce factually incorrect outputs, misclassify low-probability but high-impact cases, and fail to surface uncertainty in ways that are meaningful to human decision-makers (Marcus, 2024; Nautilus, 2019). At scale, these errors compound: misrouted invoices, wrongly approved claims, bad medical suggestions, and regulatory breaches create legal and reputational liabilities that far outweigh short-term operational savings. This is why partial automation treats human judgment as a persistent control variable, not an ephemeral fallback (Abulibdeh, 2025; ISO/IEC, 2023).

Beyond the technical failures, broader economic dynamics amplify the risk. The gold-rush reflex of selling shovels rather than building durable products has flooded the market with infrastructure investment (data centers, GPUs) and speculative startups that optimize model size and throughput instead of utility and labeled-data quality. The result is an oversupply of compute, underinvestment in high-quality labeled datasets, and a glut of poorly validated point solutions (agent apps, “automate-everything” platforms) that cannot handle real-world file types or enterprise workflows. Many application startups face two correlated financing problems: (1) managers hesitate to fund projects with short technical half-lives, and (2) investors see fragile unit economics in which constant retraining and frequent infrastructure upgrades eat margins. The likely endgame is diminishing returns from scale (the end of naive scaling), high vendor churn, and a market where only a few providers deliver durable AI services with genuine product-market fit (Marcus, 2024; FTSG, 2025). The practical implication: without partial automation, firms risk costly technical debt, regulatory conflict, and a lost decade of faith in AI-driven transformation (FTSG, 2025).

Examples and links: critiques of LLM ceilings and scaling problems (Marcus, 2024) and the debate on the limits of deep learning (https://nautil.us/deep-learning-is-hitting-a-wall-238440/) illustrate the technical argument. For industry consequences and economic dynamics, see the FTSG 2025 tech trends report (https://ftsg.com/wp-content/uploads/2025/03/FTSG_2025_TR_FINAL_LINKED.pdf) and ISO/IEC 23894 guidance on AI risk management (https://www.iso.org/standard/77304.html).

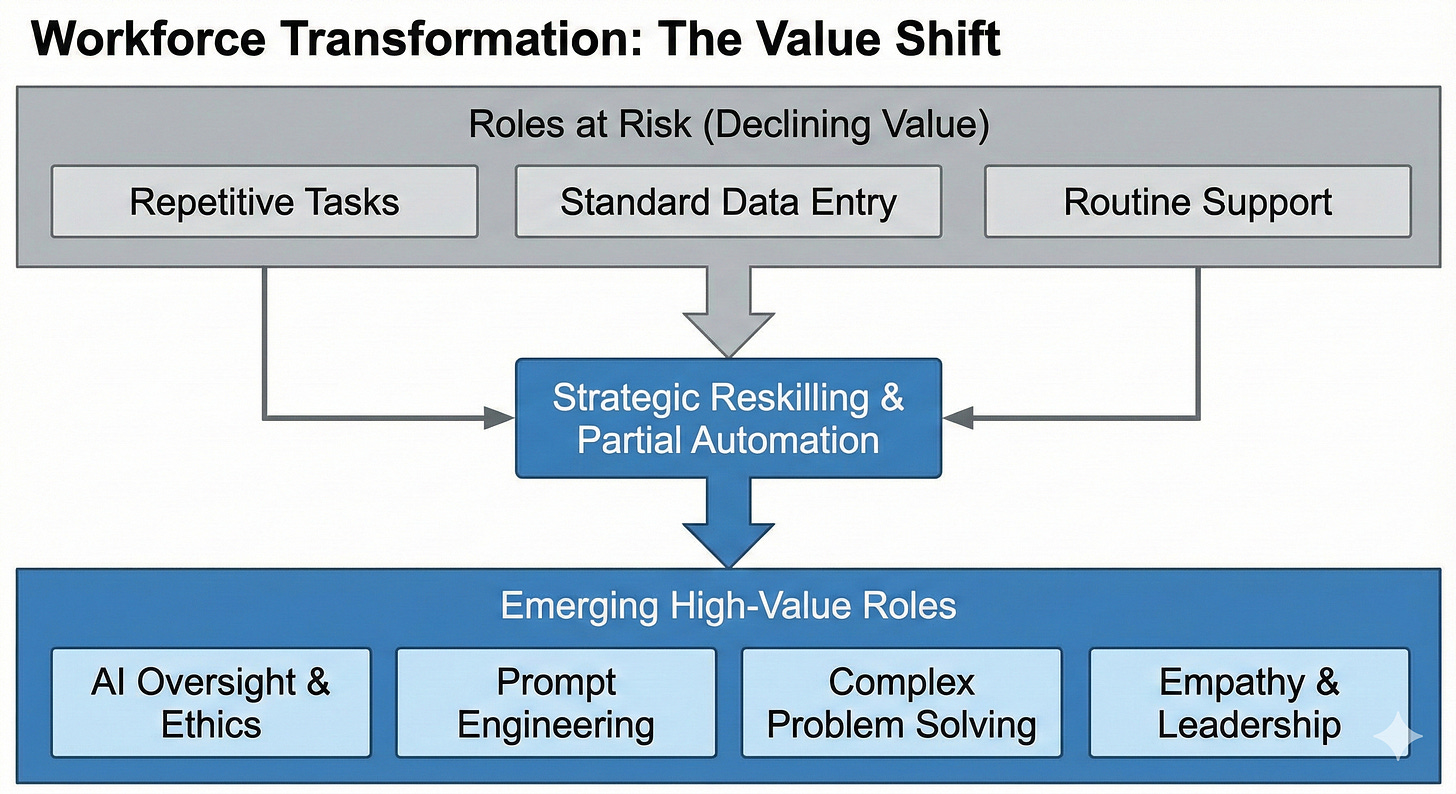

What Jobs Is AI Taking Over?

The accelerated adoption of artificial intelligence (AI) is transforming the job market across sectors. Roles characterized by repetitive tasks, standard data processing, and routine decision-making, especially in manufacturing, administrative support, warehousing, and customer service, are increasingly susceptible to automation. AI systems can now perform many of these functions faster and more consistently than human workers, prompting many organizations to restructure their workforces (Intoo, 2025).

Recent analyses project that up to 30% of U.S. jobs could be automated by 2030. Globally, data entry clerks, telemarketers, and basic customer service representatives are among the first roles affected. AI-driven chatbots and warehouse automation are not only replacing task-based roles but also reshaping business models; fast-food restaurants, for example, have introduced kiosk ordering and robotic food preparation (Forbes, 2025; Vktr, 2025).

Importantly, the rise of AI does not result in universal job loss. While certain categories of work become obsolete, new roles emerge in AI oversight, development, prompt engineering, maintenance, ethics, and systems integration. Professions demanding emotional intelligence, creative vision, and complex problem-solving are becoming even more valuable as AI’s scope expands, with leadership and communication now regarded as top-tier skills in hiring for 2025 (Autodesk, 2025). As hybrid human-AI teams become the norm, organizations must balance the efficiency of automation with the irreplaceable qualities humans bring to collaborative, high-context environments.

The implications for workforce strategy are clear: partial automation supports human-AI collaboration and enhances productivity, whereas full automation may cause entire job categories to disappear. Companies must assess their approach carefully, deploying technology to augment and complement human potential wherever possible (Intoo, 2025; Autodesk, 2025).

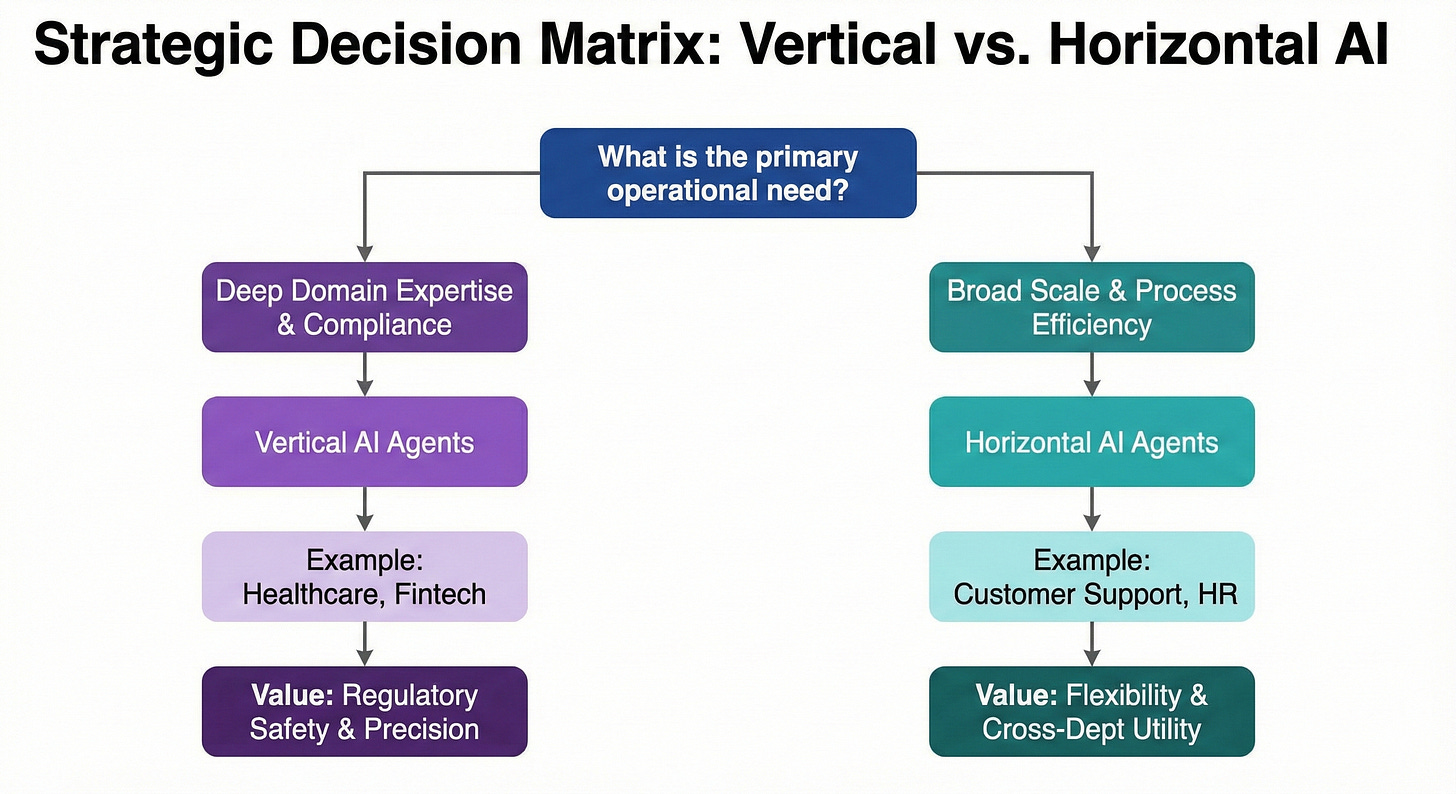

Vertical AI vs. Horizontal AI Agents

In the evolving landscape of artificial intelligence, understanding the distinctions between vertical and horizontal AI agents is crucial for organizations seeking optimal automation strategies. Vertical AI agents are specialized systems crafted to address tasks and challenges specific to a given industry—such as healthcare, finance, or manufacturing. These agents leverage deep domain knowledge, allowing them to deliver regulatory-compliant, precise solutions tailored to sector requirements (Multimodal.dev, 2025).

Horizontal AI agents, by contrast, are generalist systems applied across multiple industries and operational domains. Their strengths lie in flexibility and scalability: horizontal agents are ideal for broad applications such as customer support, data analytics, or process automation, though they may lack the deep specialization required for complex, industry-specific problems (Digital Divide Data, 2025).

Choosing between vertical and horizontal AI depends on the organization’s strategic priorities and how it plans to use AI in its operations. Companies facing specialized regulatory, technical, or workflow challenges (in healthcare diagnostics or securities trading, for example) are likely to benefit most from vertical AI’s depth and precision. Firms seeking scalable solutions that adapt to new use cases across the enterprise may prefer the reach and flexibility of horizontal AI agents (Interview Kickstart, 2025).

The distinction matters in automation planning. Partial automation often yields outsized benefits for vertical AI, where human oversight complements machine-driven expertise in complex decisions and compliance. Full automation aligns more easily with horizontal AI, driving efficiency in standardized, cross-cutting processes. Effective AI foresight accounts for both types, so that technology strategy fits the industry’s operational and compliance demands (Multimodal.dev, 2025; Digital Divide Data, 2025).

Partial Automation: A Human-Centric AI Approach

As artificial intelligence (AI) continues to advance, partial automation emerges as a strategic imperative, blending the computational power of AI with the irreplaceable value of human oversight. Rather than relinquishing control to fully autonomous systems, organizations are increasingly integrating AI into decision-making workflows while maintaining human agency and accountability—a practice now recognized as essential for responsible AI adoption (TechDispatch, 2025; Vidizmo, 2025).

Strategic foresight in AI deployment has become non-negotiable as the pace of technological change accelerates. Organizations that actively anticipate future scenarios in automation are better equipped to seize opportunities and mitigate risks. By applying strategic foresight, companies can not only identify optimal applications for AI but also ensure systems remain adaptable to evolving regulatory, ethical, and operational environments. The European Commission’s 2025 foresight report and global technology trend analyses both stress the importance of well-prepared, future-oriented AI investment and governance structures (European Commission, 2025; FTSG, 2025).

Despite the allure of end-to-end automation, the distinctive strengths of human judgment (emotional intelligence, ethical reasoning, and contextual understanding) remain critical in high-stakes decisions. Human oversight helps mitigate automation bias, keeps systems aligned with societal values, and sustains trust among end users and regulators. Regular external audits, continuous operator training, and a culture that encourages critical review of automated outputs are widely advocated practices for AI oversight and risk management (TechDispatch, 2025; OECD, 2025).

AI’s impact spans multiple domains. In healthcare, supply chain management, and logistics, AI agents increasingly automate routine data analysis, billing, and inventory control. This drives efficiency and cost savings and frees workers to focus on creative, problem-solving tasks that machines cannot easily replicate. For example, AI-enabled supply chains can improve demand forecasting and resource allocation while routine tasks are delegated to AI-powered virtual assistants, suggesting that partial automation can improve both operational performance and job quality (Simbo AI, 2025; Intellias, 2025; PMC, 2023).

Looking ahead, the successful adoption of AI depends on responsible practices throughout the AI lifecycle. Organizations must invest in transparent governance, continuous upskilling, and ethical frameworks to ensure that AI systems meet safety and reliability standards while serving organizational and societal goals (Microsoft, 2024; Responsible AI, 2025). Ultimately, partial automation is not just a technological compromise—it is a forward-thinking approach that enables organizations to harness AI’s transformative potential without sacrificing human dignity or ethical standards.

Pathologies of Governance and Finance: Corruption, Overregulation, and Fragile Startups

Ignoring human-centric limits invites policy and market pathologies that undermine trust and innovation at the same time. Overzealous regulation that treats every application as high-risk creates bureaucratic drag that slows legitimate innovation, while weak or corrupted enforcement lets harmful systems proliferate. Reported whistleblower concerns and early implementation frictions around the EU AI Act point to exactly this dual hazard: enforcement capture and inconsistent application across member states, which both hamper compliance and invite regulatory arbitrage. Meanwhile, public-sector procurement that lacks transparent governance can accelerate poor deployments, embedding biased or unsafe systems into public services where they are hard to unwind. The answer is not deregulation but calibrated governance that enforces human-in-the-loop controls where necessary and avoids blanket bans that stifle practical augmentation strategies (European Commission, 2025; Covington & Burling LLP, 2023).

Financial incentives compound the problem. Venture capital and corporate funding patterns favor headline-grabbing autonomy narratives (agents, automate-everything startups, push-button product promises) over slower, more sustainable investment in labeled data, process integration, and human-centered UX. This produces two linked failure modes: (1) many small vendors try to monetize agent frameworks without the engineering needed to handle real-world document formats (Microsoft Office files, PDFs, enterprise ERPs), and (2) enterprises grow risk-averse because early pilots are fragile and quickly obsolete. The result is a “trial-and-abandon” cycle: pilots launch, fail in production, and executives withhold further funding. The structural remedy is to reallocate capital toward hybrid solutions and data-centric engineering, with funding models that prioritize data quality, operational integration, and long-term product maintenance over one-off automation demos. Snorkel AI (data-centric labeling and MLOps) and human-in-the-loop data platforms such as Scale AI and Labelbox exemplify this alternative thesis, which focuses on robustness and operational value rather than pure autonomy (Snorkel AI, n.d.; Scale AI, n.d.; Labelbox, n.d.).

Examples and links: for EU foresight on AI governance, see the European Commission’s 2025 Strategic Foresight Report (https://commission.europa.eu/document/download/bdba60f0-abb3-42f8-b5be-fd35d693b289_en?filename=SFR2025-Report_web.pdf); for the U.S. policy frame, see Covington & Burling’s analysis of the 2023 AI executive order (https://www.cov.com/en/news-and-insights/insights/2023/11/biden-administration-releases-artificial-intelligence-executive-order). For data-centric and human-in-the-loop platforms, see the public materials and case studies of Snorkel AI (https://snorkel.ai/), Scale AI (https://scale.com/), and Labelbox (https://labelbox.com/).

Policy That Limits Full Automation While Expanding Full Employment

Public policy can turn partial automation from a technical framework into a societal advantage by regulating high-risk autonomy, funding continuous reskilling, and ensuring that productivity gains are widely shared rather than concentrated. The European Union AI Act codifies this logic: it sorts use cases into risk tiers and imposes requirements for transparency, human oversight, robust data governance, and auditability on high-impact domains. For the most serious violations, fines reach up to €35 million or 7% of worldwide annual turnover, whichever is higher, a material incentive for globally active companies to prioritize responsible AI everywhere (IBM, 2024).
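The top-tier cap is a simple maximum, which is why it bites hardest on large firms: once total worldwide annual turnover for the preceding financial year exceeds €500 million, the 7% share exceeds the fixed €35 million amount. The sketch below covers only that prohibited-practices tier; the function name is illustrative, and lower tiers (and the rule that SMEs face the lower of the two amounts) use different figures.

```python
def max_ai_act_fine_eur(worldwide_turnover_eur: float,
                        fixed_cap_eur: float = 35_000_000,
                        turnover_share: float = 0.07) -> float:
    """Ceiling for the EU AI Act's top penalty tier (prohibited practices):
    the higher of a fixed amount and a share of worldwide annual turnover.
    Lower tiers and the SME rule (lower of the two) are not modeled."""
    if worldwide_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    return max(fixed_cap_eur, turnover_share * worldwide_turnover_eur)


if __name__ == "__main__":
    for turnover in (100e6, 500e6, 2e9):
        cap = max_ai_act_fine_eur(turnover)
        print(f"turnover EUR {turnover:,.0f} -> maximum fine EUR {cap:,.0f}")
```

For a company with €2 billion in turnover, the ceiling is €140 million, four times the fixed amount.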

The AI Act is not the only regulatory milestone. In the United States, the Biden Administration’s October 2023 “Executive Order on Safe, Secure, and Trustworthy Artificial Intelligence” directed more than a hundred coordinated federal agency actions, including safety risk assessments, sectoral standards, privacy protections, worker impact assessments, consumer protections, and international collaboration. Legal analysts highlighted its reach into public-sector procurement, safety mandates, and AI use across health, labor, law enforcement, and commercial functions (Covington & Burling LLP, 2023). The order was rescinded in January 2025, but its structure still illustrates how coordinated, sector-specific oversight can be organized.

Reskilling is the essential companion policy. Singapore’s SkillsFuture platform is a durable template for channeling AI-driven productivity gains into lifelong learning and targeted upskilling, and it is credited with a world-leading adoption rate for AI and digital skills among professionals (Leadership Institute, 2025). Amazon’s $1.2 billion multi-year upskilling commitment sets a precedent for private-sector transition support, cushioning economic shifts and future-proofing its workforce (Harness, 2025).

The throughline across jurisdictions is clear: limit full automation where societal risk is high, and reinvest savings directly into education and capability-building to maximize both full employment and upward mobility.

Augmentation First: Operating Models That Compound Human Capability

The rapid adoption of AI solutions in supply chains, logistics, real-time analytics, and software development has made augmentation, not replacement, the key to sustainable progress. Under augmentation models, machines handle triage, retrieval, and pattern recognition; humans take command when stakes are high, ambiguity is significant, or ethical values must be applied.

In critical industries such as aviation maintenance, energy management, and industrial operations, firms deploy predictive models to flag anomalies while human engineers retain decision authority, reducing downtime without giving up accountability. This “controlled boundary” approach reduces “unknown unknowns” and creates transparent accountability trails (IBM, 2024).
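The controlled-boundary pattern can be sketched with a simple rolling z-score detector standing in for a real predictive model. The detector only raises flags; each flag carries an `approved_by` field that stays empty until an engineer signs off, and a downstream work-order system (not shown) would act only on approved flags. The z-score method, the window of 5 readings, the threshold of 3.0, and the `Flag` and `flag_anomalies` names are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import Optional


@dataclass
class Flag:
    sensor_id: str
    index: int
    value: float
    z_score: float
    approved_by: Optional[str] = None  # decision authority stays with a human

    def approve(self, engineer: str) -> None:
        """Record the engineer who accepted the flag."""
        self.approved_by = engineer


def flag_anomalies(sensor_id: str, readings: list[float],
                   window: int = 5, threshold: float = 3.0) -> list[Flag]:
    """Flag readings more than `threshold` standard deviations away from
    the mean of the preceding `window` readings."""
    flags = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            continue  # flat history: z-score undefined, skip rather than guess
        z = (readings[i] - mu) / sigma
        if abs(z) > threshold:
            flags.append(Flag(sensor_id, i, readings[i], round(z, 2)))
    return flags


if __name__ == "__main__":
    vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 4.2, 1.0]
    for f in flag_anomalies("pump-7", vibration):
        print(f"{f.sensor_id} reading #{f.index}={f.value} z={f.z_score}: awaiting engineer")
```

The model narrows attention to one suspicious reading; whether to pull the pump offline remains an engineer's call, and the recorded approval becomes part of the accountability trail.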

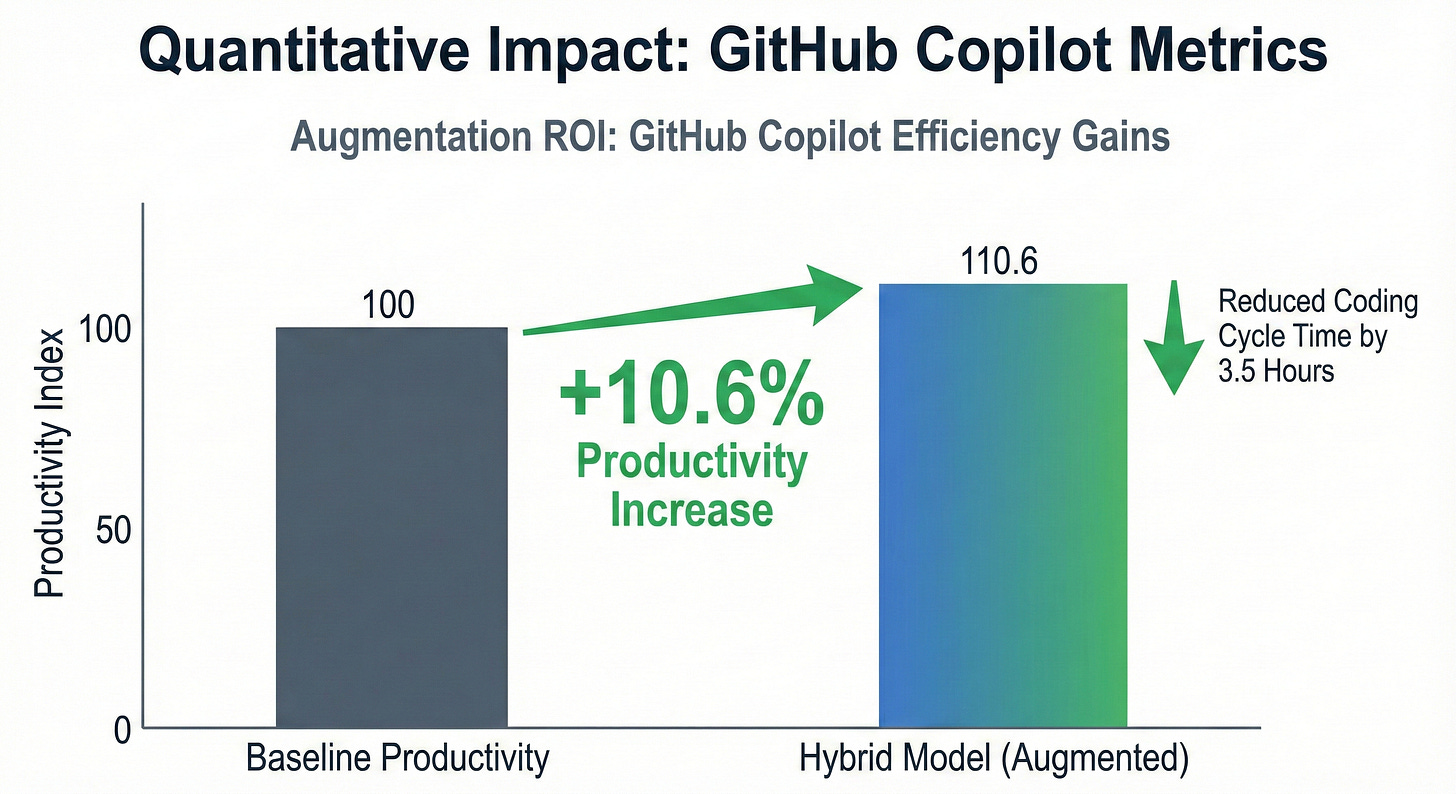

In software development, the hybrid model shines: GitHub Copilot speeds up coding with AI suggestions, but teams get the best speed and quality when humans review, refactor, and enforce security checks. Reported productivity gains of 6.5–10.6% and shorter coding cycle times illustrate the value of augmentation over unchecked automation (Harness, 2025).

Creative, legal, and compliance workflows that attempt “end-to-end” automation often learn the hard way that short-term velocity gains can be quickly offset by reputational, legal, and operational risks. By contrast, hybrid pipelines (AI for retrieval and drafting, human judgment for review and sign-off) not only shield against risk but also create feedback loops that improve over time: every human correction becomes a structured signal for continuous fine-tuning and organizational learning.
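One way to turn review into structured signal is to log every human edit to an AI draft as a record that pairs the model output with the approved version, plus a reason code. Storing rejected/chosen pairs is one common format for preference data; the `Correction` schema, the reason codes, and the JSON Lines output are assumptions for illustration, and a production pipeline would add identifiers, consent, and retention rules.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class Correction:
    """One human edit to an AI draft, captured at review time."""
    task_id: str
    model_output: str
    human_output: str
    reviewer: str
    reason: str  # hypothetical reason code, e.g. "factual_error", "tone", "policy"
    timestamp: str = field(default_factory=_utc_now)

    @property
    def changed(self) -> bool:
        # Edits that only add leading/trailing whitespace are not corrections.
        return self.model_output.strip() != self.human_output.strip()


def to_training_jsonl(corrections: list[Correction]) -> str:
    """Emit drafts a reviewer actually changed as JSON Lines of
    rejected/chosen pairs for evaluation sets or preference tuning."""
    rows = [
        json.dumps({
            "task_id": c.task_id,
            "rejected": c.model_output,
            "chosen": c.human_output,
            "reason": c.reason,
            "reviewer": c.reviewer,
            "timestamp": c.timestamp,
        })
        for c in corrections
        if c.changed
    ]
    return "\n".join(rows)


if __name__ == "__main__":
    log = [
        Correction("c-1", "Refund approved.", "Refund approved.", "ana", "none"),
        Correction("c-2", "Clause 4 is void.",
                   "Clause 4 may be unenforceable; escalate to counsel.",
                   "ben", "overclaim"),
    ]
    print(to_training_jsonl(log))
```

Unchanged drafts are dropped, so the exported file contains only cases where human judgment added information; the reason codes also let teams count which error types recur.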

Governance Without Friction: Building Trust While Moving Fast

Trust is a strategic asset. Organizations that automate judiciously and govern transparently move faster in the long run because they suffer fewer catastrophic failures, learn more from human feedback, and keep regulators, customers, and top talent aligned. Here, the goal is not to impose more bureaucracy; it is to clarify boundaries and enable robust observability across workflows (ISO/IEC, 2023).

Practical governance means deploying model cards, tracking detailed data lineage, enforcing human override policies, and embedding audit trails in operational systems. ISO/IEC 23894 provides an adaptable, sector-agnostic framework for AI risk management that can be tailored to specific vertical regulations, from finance to healthcare to logistics (ISO/IEC, 2023).
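The sketch below shows one way to make an audit trail tamper-evident while capturing the elements named above: the model version (a pointer to its model card), input lineage, the model's recommendation, the final human decision, and whether that decision was an override. Hash-chaining each entry to the previous one is a standard technique for append-only logs; the `AuditLog` class and its field names are hypothetical and not part of ISO/IEC 23894.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(entry: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained decision log: each entry stores the hash of
    the previous one, so editing any past entry breaks verification."""
    entries: list[dict] = field(default_factory=list)

    def record(self, model_version: str, input_ids: list[str],
               model_decision: str, final_decision: str, actor: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {
            "model_version": model_version,  # which model card applies
            "input_ids": input_ids,          # lineage: data behind the decision
            "model_decision": model_decision,
            "final_decision": final_decision,
            "overridden": model_decision != final_decision,
            "actor": actor,                  # who signed off
            "prev_hash": prev_hash,
        }
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = "0" * 64
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if e["prev_hash"] != prev or e["hash"] != _digest(body):
                return False
            prev = e["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.record("credit-scorer-v3", ["app-981", "bureau-2024-11"],
               "deny", "approve", "analyst.lee")
    print(log.verify())                        # True
    log.entries[0]["final_decision"] = "deny"  # simulated tampering
    print(log.verify())                        # False
```

Because overrides are recorded explicitly, the same log can report how often humans disagree with the model, a useful drift signal in its own right.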

Tooling advances matter: retrieval-augmented assistants, function-calling APIs, and deterministic checks make outputs more auditable and enable human sign-off without slowing operations. Enterprise vendors such as ServiceNow and Datadog now pair AI features with change controls, rollbacks, and observability tooling. Speed is preserved, and risks are managed (ServiceNow, 2024; Datadog, 2025).
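A deterministic check is ordinary code that validates model output before anyone signs off. The example assumes a model (for instance, called through a function-calling API) returns invoice fields as JSON; the field names, the allowed-currency list, and `check_invoice_extraction` are hypothetical. Any output with a problem would be routed to a human instead of flowing straight into downstream systems.

```python
import json
from decimal import Decimal, InvalidOperation

REQUIRED = ("invoice_id", "currency", "total", "line_items")
ALLOWED_CURRENCIES = {"EUR", "USD", "GBP"}  # hypothetical policy


def check_invoice_extraction(raw: str) -> list[str]:
    """Run deterministic checks on model-extracted invoice JSON.
    Returns a list of problems; an empty list means it may go to sign-off."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]
    problems = [f"missing field: {k}" for k in REQUIRED if k not in data]
    if problems:
        return problems
    if data["currency"] not in ALLOWED_CURRENCIES:
        problems.append(f"unexpected currency: {data['currency']}")
    try:
        # Decimal avoids float rounding surprises when comparing money.
        total = Decimal(str(data["total"]))
        items = sum((Decimal(str(i["amount"])) for i in data["line_items"]),
                    Decimal("0"))
    except (InvalidOperation, KeyError, TypeError):
        return problems + ["amounts are not parseable numbers"]
    if items != total:
        problems.append(f"line items sum to {items}, total says {total}")
    return problems


if __name__ == "__main__":
    good = ('{"invoice_id": "INV-1", "currency": "EUR", "total": 20,'
            ' "line_items": [{"amount": 12.5}, {"amount": 7.5}]}')
    bad = ('{"invoice_id": "INV-2", "currency": "EUR", "total": 25,'
           ' "line_items": [{"amount": 12.5}]}')
    print(check_invoice_extraction(good))  # []
    print(check_invoice_extraction(bad))   # ['line items sum to 12.5, total says 25']
```

Checks like these cost almost nothing at runtime, so they preserve speed while ensuring that human reviewers see only the outputs that genuinely need judgment.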

Blueprint for Partial Automation: Roadmap for Practitioners

Organizations seeking to operationalize partial automation should follow a structured, phased approach:

1. Identify human-critical moments: Use risk and reversibility frameworks to map workflows. For all high-stakes or low-reversibility decisions—clinical diagnoses, regulated pricing changes, financial approvals—embed mandatory human sign-off and clear escalation paths (ISO/IEC, 2023).

2. Architect for augmentation: Use lightweight, grounded assistants and tool-use integrations, and enforce logging and audit standards. Minimize unnecessary model complexity; invest in toolchains that can be monitored and overridden (Harness, 2025).

3. Operationalize structured feedback: Convert human interventions (labels, critiques, rubrics) into continuous improvement data. Feed corrections into fine-tuning cycles, evaluation routines, and curated playbook updates for better organizational learning (Klarna, 2025).

4. Measure what matters: Monitor cost-to-serve, CSAT/NPS, cycle times, error rates segmented by severity, model drift, and regulatory performance indicators. Ensure that automation-driven savings directly fund ongoing reskilling (Leadership Institute, 2025).

5. Govern with clarity: Mandate model cards, detailed lineage, granular access controls, and override policies. Use regional regulatory frameworks as a baseline, then layer on sector-specific standards (Covington & Burling LLP, 2023).
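Step 1 of the roadmap can be made concrete as a lookup from two ratings to an oversight mode. The three-level scale, the mode names, and the example ratings below are hypothetical; the one rule taken directly from the blueprint is that any high-stakes or low-reversibility decision requires human sign-off.

```python
from enum import Enum


class Level(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def oversight_mode(stakes: Level, irreversibility: Level) -> str:
    """Map a decision's stakes and irreversibility to an oversight mode."""
    if stakes is Level.HIGH and irreversibility is Level.HIGH:
        return "human_decides"   # model may advise; a person makes the call
    if stakes is Level.HIGH or irreversibility is Level.HIGH:
        return "human_approves"  # mandatory sign-off before the action executes
    if stakes is Level.MEDIUM or irreversibility is Level.MEDIUM:
        return "human_samples"   # automated, with sampled post-hoc review
    return "automated"           # logged and monitored only


if __name__ == "__main__":
    # Hypothetical ratings for illustration only.
    workflows = {
        "clinical diagnosis": (Level.HIGH, Level.HIGH),
        "regulated price change": (Level.HIGH, Level.MEDIUM),
        "invoice coding": (Level.MEDIUM, Level.LOW),
        "FAQ answer": (Level.LOW, Level.LOW),
    }
    for name, (stakes, irreversibility) in workflows.items():
        print(f"{name}: {oversight_mode(stakes, irreversibility)}")
```

Keeping the mapping in code (or configuration) makes the oversight policy reviewable and versioned, rather than an informal judgment made workflow by workflow.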

This blueprint is not theoretical—it reflects the emerging best practices of leading enterprises across sectors.

Looking Ahead to 2025 and Beyond

The coming years will see AI expand further into domain-crossing workflows and general-purpose enterprise assistants. The responsible path is unambiguous: partial autonomy gated by human oversight, grounded outputs with traceable provenance, and workforce strategies that deliver broad-based prosperity. The true frontier is redesigning work: enabling people at all levels to frame problems, handle exceptions, arbitrate ethical questions, and drive strategic learning where machines cannot or should not tread (IBM, 2024).

With AI permeating more sectors and citizen life, leaders must acknowledge that maximizing efficiency alone will never be enough. The quality of life, work, and civic participation must be raised for all stakeholders. Inclusion, safety, and learning are essential values in the age of augmented intelligence.

Conclusion

The allure of full automation is understandable in a business landscape driven by margins. Yet the quantitative and qualitative evidence, regulatory incentives, and emerging technical consensus all point away from end-to-end automation and toward human-in-the-loop designs. Partial automation, deployed with clear governance, progressive reskilling, and augmentation-first operating models, holds the promise of compounding value for enterprises and societies alike. Human oversight must be treated as a feature, not a flaw, if AI is to augment rather than erode welfare. The future belongs to organizations and leaders who can balance velocity, reliability, and purpose.

Challenge to Leaders

As an actionable step, leaders should select one high-impact workflow and redesign it for augmentation within the next 90 days: map all human-critical moments, instrument grounding and tool-based checks, establish feedback loops, and publish oversight policies for AI-assisted decisions. Most importantly, reinvest 20–30% of realized efficiency gains into upskilling so that teams and stakeholders are equipped to thrive in the augmented era (Klarna, 2025; Leadership Institute, 2025; Covington & Burling LLP, 2023).

Non-Obvious Insights

Partial automation reduces regulatory exposure and operational variance more than it decreases speed, turning compliance requirements into performance advantages (ISO/IEC, 2023).

Reinvesting automation benefits into structured upskilling reliably compounds organizational capability and improves machine learning through superior feedback data (Harness, 2025).

Mapping decisions by risk and reversibility clarifies when and where human oversight creates outsized ROI (IBM, 2024).

Lean models with strong toolchains can deliver lower total cost of ownership and higher auditability than ambitious end-to-end automation strategies (Klarna, 2025).

Transparent governance mechanisms measurably increase organizational velocity and resilience by reducing catastrophic failure risk and regulatory drag (Covington & Burling LLP, 2023).

References (APA7)

Abulibdeh, R. (2025). The illusion of safety: A report to the FDA on AI healthcare products. PLOS Digital Health. https://pmc.ncbi.nlm.nih.gov/articles/PMC12140231/

Autodesk. (2025, July 8). AI job growth in Design and Make: 2025 report. https://adsknews.autodesk.com/pt-br/news/ai-jobs-report/

Covington & Burling LLP. (2023, November 1). Biden Administration releases artificial intelligence executive order. Covington Insights. https://www.cov.com/en/news-and-insights/insights/2023/11/biden-administration-releases-artificial-intelligence-executive-order

Datadog. (2025). LLM observability. https://www.datadoghq.com/product/llm-observability/

Digital Divide Data. (2025, May 21). Horizontal vs. Vertical AI: Which Is Right for Your Organization’s AI adoption strategy? https://www.digitaldividedata.com/blog/horizontal-vs-vertical-ai

European Commission. (2025). FORESIGHT REPORT 2025. https://commission.europa.eu/document/download/bdba60f0-abb3-42f8-b5be-fd35d693b289_en?filename=SFR2025-Report_web.pdf

FTSG (Future Today Strategy Group). (2025). 18th edition – 2025 tech trends report. https://ftsg.com/wp-content/uploads/2025/03/FTSG_2025_TR_FINAL_LINKED.pdf

Forbes. (2025, April 24). These Jobs Will Fall First As AI Takes Over The Workplace. https://www.forbes.com/sites/jackkelly/2025/04/25/the-jobs-that-will-fall-first-as-ai-takes-over-the-workplace/

Marcus, G. (2024). Evidence that LLMs are reaching a plateau [Substack post].

Harness. (2025, June 25). The impact of GitHub Copilot on developer productivity. https://www.harness.io/blog/the-impact-of-github-copilot-on-developer-productivity-a-case-study

IBM. (2024, September 19). What is the EU AI Act? https://www.ibm.com/think/topics/eu-ai-act

Intellias. (2025, June 24). Real-World Examples of Companies Using AI In Supply Chain. https://intellias.com/ai-in-supply-chain/

Interview Kickstart. (2025, September 22). Key Differences Between Horizontal vs Vertical AI Agents. https://interviewkickstart.com/blogs/articles/horizontal-vs-vertical-ai-agents

Intoo. (2025, July 29). Is AI Taking Over Jobs? What Employers Need to Know in 2025. https://www.intoo.com/us/blog/is-ai-taking-over-jobs/

ISO/IEC. (2023). ISO/IEC 23894:2023: Guidance on risk management. https://www.iso.org/standard/77304.html

Klarna. (2025, March 26). Klarna AI assistant handles two-thirds of customer service chats in its first month. https://www.klarna.com/international/press/klarna-ai-assistant-handles-two-thirds-of-customer-service-chats-in-its-first-month/

Labelbox. (n.d.). Labelbox: Training data platform. https://labelbox.com/

Leadership Institute. (2025, July 7). Upskilling in Singapore: Why SkillsFuture-eligible AI courses are key. https://www.leadershipinstitute.sg/upskilling-in-singapore-why-skillsfuture-eligible-ai-courses-are-key/

Microsoft. (2024, March 27). Responsible AI: Ethical policies and practices. https://www.microsoft.com/en-us/ai/responsible-ai

Multimodal.dev. (2025, February 23). Horizontal vs. Vertical AI Agents: The Right Option for Your Organization. https://www.multimodal.dev/post/horizontal-vs-vertical-ai-agents

Nautilus. (2019). Deep learning is hitting a wall. Nautilus. https://nautil.us/deep-learning-is-hitting-a-wall-238440/

OECD. (2025). How artificial intelligence is accelerating the digital government journey. https://www.oecd.org/en/publications/governing-with-artificial-intelligence_795de142-en/full-report/how-artificial-intelligence-is-accelerating-the-digital-government-journey_d9552dc7.html

PMC. (2023, December 10). Intelligent selection of healthcare supply chain mode. https://pmc.ncbi.nlm.nih.gov/articles/PMC10758214/

Responsible AI. (2025, September 15). Home - Responsible AI. https://www.responsible.ai

Scale AI. (n.d.). Scale: Data labeling and AI infrastructure [Company materials]. https://scale.com/

ServiceNow. (2024). Service observability. https://www.servicenow.com/products/observability.html

Simbo AI. (2025, July 3). The Role of Technology and AI in Transforming Healthcare Supply Chain Management for Better Outcomes. https://www.simbo.ai/blog/the-role-of-technology-and-ai-in-transforming-healthcare-supply-chain-management-for-better-outcomes-4213232/

Snorkel AI. (n.d.). Snorkel AI: Data-centric AI platform. https://snorkel.ai/

TechDispatch. (2025, September 22). Human Oversight of Automated Decision-Making. https://www.edps.europa.eu/data-protection/our-work/publications/techdispatch/2025-09-23-techdispatch-22025-human-oversight-automated-making_en

Vidizmo. (2025, June 1). Responsible AI Development Services: 10 Best Practices. https://vidizmo.ai/blog/responsible-ai-development

Vktr. (2025, July 29). 10 Jobs Most at Risk of AI Replacement in 2025 (And How To Navigate The Change). https://www.vktr.com/ai-upskilling/10-jobs-most-at-risk-of-ai-replacement/